The AI Equation

What $NVDA’s $80B forecast means (and doesn’t mean) for cloud AI revenue, and why 2024 could be the inflection point

In early 2023, I introduced what I called the "AI Equation" to measure ROI across the GPU-to-revenue pipeline. Over the year, this framework has gained traction across investment circles.

Reflecting on 2023, however, the thesis hasn’t played out as expected. So—does it still hold? Here's my honest review, updated analysis, and thoughts on what's next.

🧮 The "AI Equation" Explained

1⃣ GPU to Cloud Revenue

Thesis:

$NVDA forecasted $46B in data center revenue for 2023 and $80B for 2024

Assuming a 14-month payback period, this should drive $20–40B in AI revenue for CSPs

Reality Check:

Top 3 CSPs reported < $3B in AI revenue for FY23

FY24 consensus for cloud revenue: ~$230B, a ~$40B increase (+20% YoY). AI doesn’t clearly show up in that number

2⃣ GPU to End Application

Thesis:

If GPUs are a 20% cost component, then an $80B GPU investment implies $400B in end-user AI revenue

Reality Check:

Actual ‘AI revenue’ (from public companies + startups) is < $3B today

Satya Nadella joked, “If we trust the GPU forecasts, US GDP should 3x”

Clearly, he's doing similar math.
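Both theses are simple ratios, so they are easy to sanity-check. The sketch below is illustrative only: the function names are hypothetical, and the payback version assumes revenue is the cash flow that pays back the GPU spend, which is one reading of "14-month payback" rather than a stated method.

```python
def revenue_from_payback(capex_usd: float, payback_months: float) -> float:
    """Annual revenue needed to recoup capex within the payback window.

    Assumption: revenue itself is treated as the payback cash flow.
    """
    return capex_usd * 12 / payback_months


def revenue_from_cost_share(gpu_spend_usd: float, gpu_cost_share: float) -> float:
    """End-user revenue implied if GPU spend is a fixed share of that revenue."""
    return gpu_spend_usd / gpu_cost_share


# Thesis 1: $46B of 2023 data center revenue, 14-month payback
csp_revenue = revenue_from_payback(46e9, 14)

# Thesis 2: $80B of GPU spend as a 20% cost component
end_user_revenue = revenue_from_cost_share(80e9, 0.20)

print(f"Implied CSP AI revenue:      ${csp_revenue / 1e9:.1f}B per year")
print(f"Implied end-user AI revenue: ${end_user_revenue / 1e9:.0f}B")
```

Under these assumptions the first number lands near the top of the $20–40B range and the second reproduces the $400B figure.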

🤔 Why Hasn’t Cloud AI Revenue Shown Up Yet?

1⃣ Time Lag & Scaling Complexity

Engineering friction: Ramping H100 clusters has been harder than expected — $MSFT and $ORCL have acknowledged major operational hurdles

Misunderstood timelines:

H100 delivery ≠ general availability (GA)

GA ≠ live-at-scale

Today, most CSPs are at limited GA, not full utilization

2⃣ AI Revenue Definitions & A100 Limitations

A100 = small base: ~$5–10B of $NVDA’s $46B FY23 data center revenue

That yields < $2B in CSP AI revenue

A100 ≠ growth driver:

Been available since 2020

Doesn’t contribute to FY23 YoY revenue bump

May explain why $AMZN disclosed only that its "AI rev is in line with $MSFT," without any growth commentary

💡 So Where Are We Now?

The AI Equation thesis is still intact.

Here’s what’s changed:

We should see a lot more H100s live at scale in 1H24

This could drive $30B in incremental AI revenue for CSPs

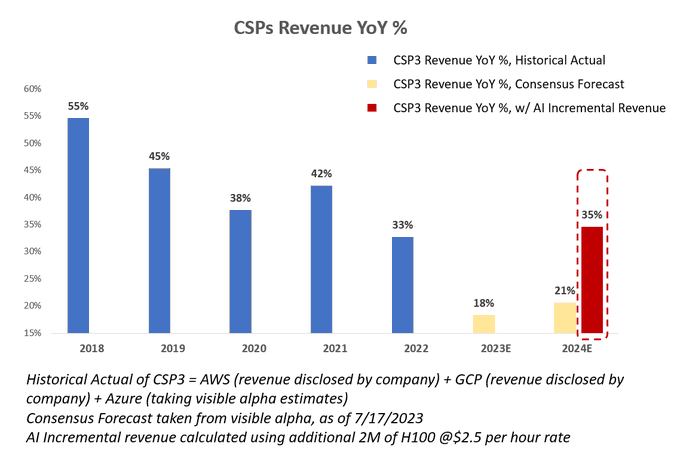

➝ Which would nearly double growth to ~35% vs. consensus of ~20%

📈 Cloud Growth Re-Acceleration: Quiet but Massive

The public cloud is quietly entering a renaissance, driven by GPU spending—and no one’s talking about it.

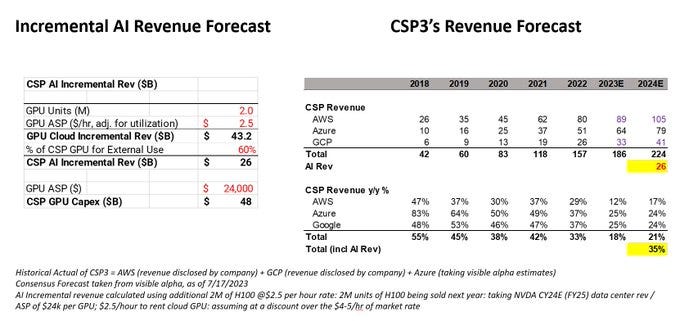

Key Assumptions:

2M units of H100 sold in 2024

2M units × ~$24K ASP ≈ $48B, or ~60% of $NVDA’s $80B data center forecast (the full $80B at that ASP would be ~3.3M GPUs)

$2.5/hour GPU rental

Below market rate of $4–5/hr

Yields ~$43B of potential annual cloud revenue (2M GPUs × $2.5/hr × 8,760 hrs, i.e. at full utilization)

Assuming 60% of usage is external → $26B in incremental CSP revenue
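The assumptions above can be checked with a few lines of arithmetic. This is a minimal sketch, not a model: the function and parameter names are hypothetical, and it assumes every GPU is rented around the clock for a full year, which is what the ~$43B figure appears to imply.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours


def gpu_rental_revenue(
    units: int,
    price_per_hour: float,
    utilization: float = 1.0,     # assumption: fully utilized
    external_share: float = 1.0,  # share of usage sold to outside customers
) -> float:
    """Annual GPU rental revenue in dollars."""
    return units * price_per_hour * HOURS_PER_YEAR * utilization * external_share


potential = gpu_rental_revenue(2_000_000, 2.5)
external = gpu_rental_revenue(2_000_000, 2.5, external_share=0.60)

print(f"Potential cloud revenue:  ${potential / 1e9:.1f}B")
print(f"Incremental CSP revenue:  ${external / 1e9:.1f}B")
```

Lowering `utilization` scales both numbers down proportionally, so the $26B should be read as a ceiling under these inputs rather than a base case.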

📊 Growth Math: Rewriting the Forecast

2023E revenue for Azure, AWS, and GCP = $186B

Consensus 2024E growth = +21%

Add $26B from H100-driven revenue on top of the ~$225B consensus → Total ≈ $251B

New growth rate = +35% YoY
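The +35% figure is reproduced when the $26B is layered on top of the consensus 2024 estimate rather than on the 2023 base. A quick sketch using the inputs above (variable names are illustrative):

```python
base_2023 = 186e9          # 2023E revenue for Azure, AWS, and GCP
consensus_growth = 0.21    # consensus 2024E growth
h100_increment = 26e9      # incremental H100-driven revenue

consensus_2024 = base_2023 * (1 + consensus_growth)
total_2024 = consensus_2024 + h100_increment
new_growth = total_2024 / base_2023 - 1

print(f"Consensus 2024E: ${consensus_2024 / 1e9:.0f}B")
print(f"With H100s:      ${total_2024 / 1e9:.0f}B")
print(f"New growth rate: {new_growth:.0%}")
```

Note that this only holds if the $26B is fully incremental, meaning none of it is already embedded in consensus.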

⚠️ Assumptions to Watch

Is GPU-driven revenue truly incremental or cannibalizing other workloads?

Is there unlimited AI demand?

What happens post-2024/25?

Will all 2M H100 units be deployed by CSPs?

Or are some being hoarded or underutilized?

The market is buzzing with a key question:

Are we stockpiling GPUs in an arms race with no end use, or will real software demand catch up?

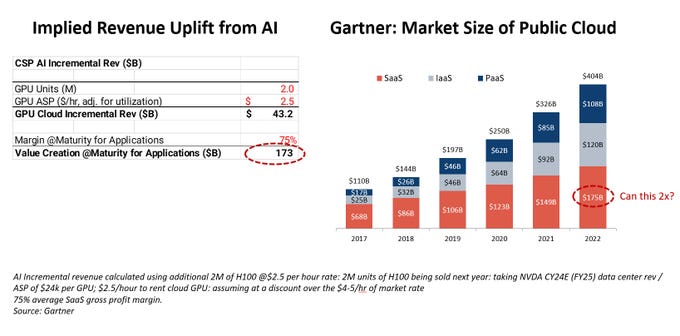

CSPs are adding ~2M chips, equating to ~$40B in cloud infrastructure spend. But that spend only makes sense if SaaS companies can monetize it.

💸 The Math: Who Pays for All This?

To justify $40B of incremental GPU/cloud cost, the industry needs to deliver ~$170B of incremental software revenue.

Why? Because cloud infra is COGS for SaaS companies.

At maturity, most SaaS operates at ~75% gross margin, so COGS is ~25% of revenue: $40–43B / 0.25 ≈ $160–170B.

So far, that kind of scale is hard to spot. Total software application revenue today doesn’t even reach that high, let alone the incremental amount needed.
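The gross-margin argument reduces to one division. The sketch below assumes, as a simplification, that cloud infrastructure is the only cost of goods sold; the function name is hypothetical.

```python
def required_software_revenue(infra_cost_usd: float, gross_margin: float) -> float:
    """Revenue needed so that infra cost equals the full COGS share (1 - margin).

    Assumption: cloud infrastructure is the only component of COGS.
    """
    return infra_cost_usd / (1 - gross_margin)


low = required_software_revenue(40e9, 0.75)   # ~$40B of infra spend
high = required_software_revenue(43e9, 0.75)  # ~$43B potential rental revenue

print(f"Required software revenue: ${low / 1e9:.0f}B to ${high / 1e9:.0f}B")
```

If software companies carry other COGS (support, hosting for non-AI workloads), the required revenue would be higher still.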

🧪 What We've Seen So Far: $MSFT Copilot

Microsoft's Copilot pricing shows what’s possible:

Announced 53% to 240% pricing uplift depending on the SKU

Morgan Stanley projects $25B in revenue uplift from Copilot (vs. $45B of O365 revenue today)

It’s one of the few AI-native apps with clear monetization traction.

🧭 But... Where Are the Other Apps?

That still leaves us asking:

Where will the other $145B in app-level revenue come from?

Which use cases justify meaningful new pricing power or adoption curves?

Productivity, customer service, creative tools, vertical SaaS... these are starting points. But none—so far—show evidence of scale close to Copilot’s.

🤔 Who Funds the Gap?

The elephant in the room:

How do businesses—and individuals—come up with the $$$ to fund all this new spend?

AI is capital-intensive. The monetization must be real, not just aspirational.

It raises critical questions:

Will AI apps become “must-have” like email or ERP?

Or will adoption stall without clear ROI or budget headroom?

Bottom line:

Cloud providers are betting big. But until $170B+ in real software revenue shows up, the ROI of the GPU gold rush remains a leap of faith.

🧠 Final Thought

This is a trillion-dollar debate at the heart of AI infrastructure investing:

If CSPs are hoarding GPUs, is the AI revenue wave about to hit, or has spending already peaked?

We’re about to find out. I welcome thoughts, challenges, and alternate takes.